How to Search for Specific Moments in Long Videos (and Turn Them Into Structured Data)

1. Video Is No Longer Just Media — It Is Data

If you have a 10-hour security feed, a recorded drone inspection, or a series of focus group recordings, you don’t just have a video file — you have a dataset.

Traditionally, finding a specific moment required manual scrubbing: dragging the timeline, pausing repeatedly, and hoping not to miss the relevant frame.

This approach has major limitations:

- No structured overview of the video content

- No ability to quantify object occurrences

- No searchable relational structure

- No integration with analytical workflows

Modern organizations need more than playback. They need structured video intelligence.

VideoSenseAI transforms video into searchable, analyzable, structured data. Learn more in our technical overview of AI Video Analysis.

2. Beyond the Timeline: Visual Aggregates and Interactive Analytics

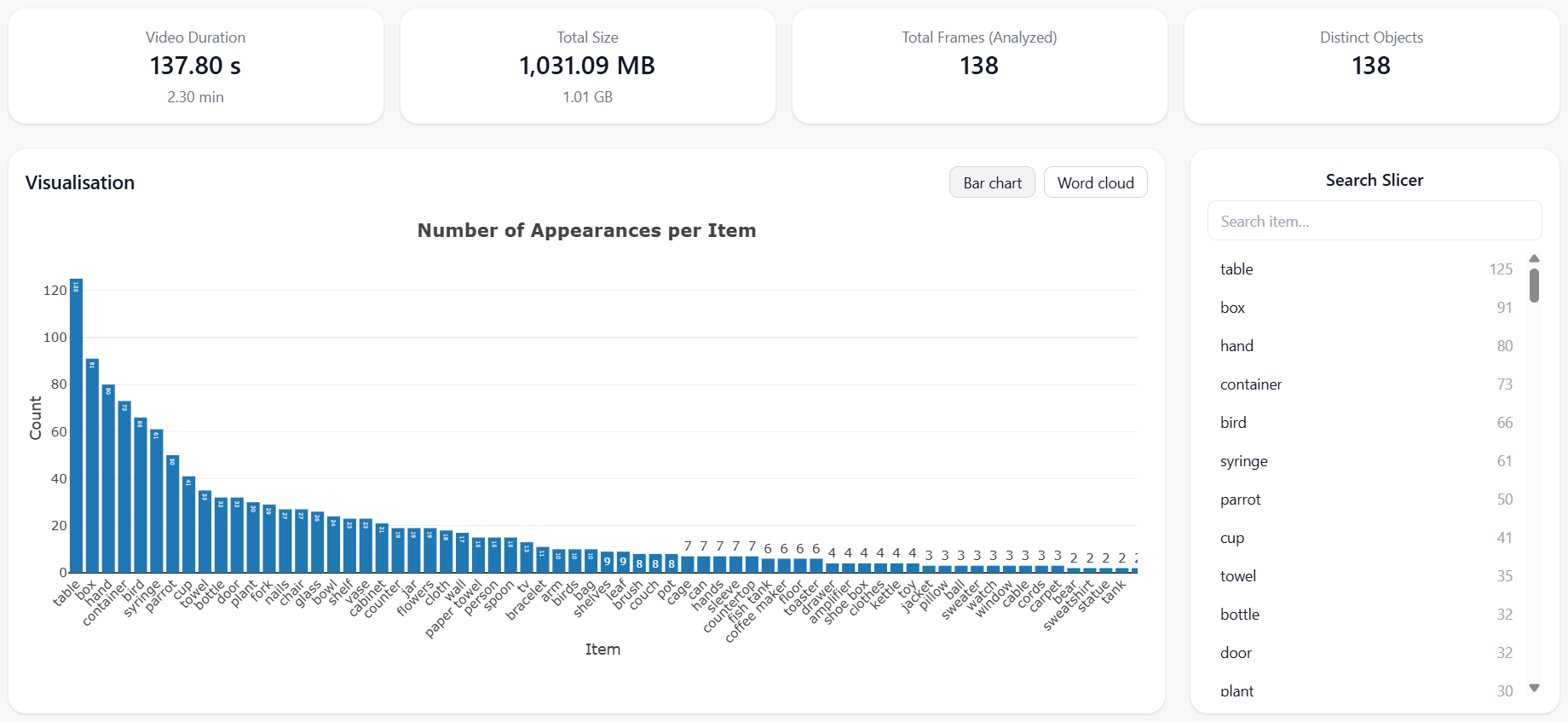

Instead of forcing users to manually explore footage, VideoSenseAI generates high-level visual analytics automatically.

These visual summaries provide an immediate understanding of the video’s structure and content distribution:

- Bar charts showing object frequency across the full video duration

- Word frequency charts derived from audio transcription

- Interactive slicers for filtering detected objects

- Timeline previews of indexed frames

This transforms video into an interactive analytical dashboard — similar to tools such as Power BI or Excel, but built specifically for visual media.
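
As a rough sketch of what sits behind an object-frequency bar chart, the snippet below counts detections per label and per one-minute bin. The `(timestamp_s, label)` row shape and the `frequency_by_bin` helper are assumptions made for illustration; they are not VideoSenseAI's actual data model or API.

```python
from collections import Counter

# Hypothetical detection rows: (timestamp in seconds, object label).
ROWS = [
    (5.0, "person"),
    (20.0, "car"),
    (65.0, "person"),
    (70.0, "person"),
    (130.0, "car"),
]

def frequency_by_bin(rows, bin_s=60.0):
    """Count detections per (time bin index, label) pair."""
    counts = Counter()
    for timestamp_s, label in rows:
        counts[(int(timestamp_s // bin_s), label)] += 1
    return counts

# Totals across the whole video: one bar per label.
totals = Counter(label for _, label in ROWS)

# Per-minute counts: one bar per label in each time bin.
by_minute = frequency_by_bin(ROWS)

for (bin_index, label), n in sorted(by_minute.items()):
    print(f"minute {bin_index}: {label} x{n}")
```

A slicer in a dashboard would amount to restricting `ROWS` to the selected labels before counting.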

To explore this indexing concept further, visit our guide on Search Inside Videos.

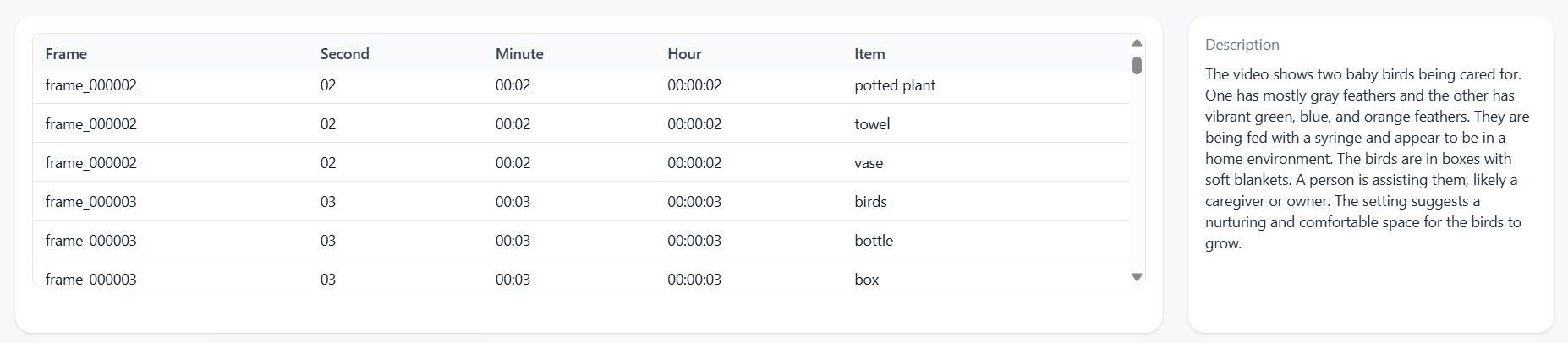

3. Transforming Video Into Structured Relational Tables

One of the most powerful capabilities of VideoSenseAI is the Structured Data Export.

Instead of remaining an unstructured file, the video is converted into a relational dataset with timestamp-level granularity: each detected element is recorded against the point in the video where it appears.

By converting visual content into rows and columns, video becomes compatible with analytical workflows, reporting systems, and auditing processes.

Data can be exported as CSV or JSON and integrated into business intelligence systems.
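
To make the idea of rows and columns concrete, here is a minimal sketch of loading such an export in Python. The column names (`timestamp_s`, `label`, `confidence`) and the sample values are assumptions for illustration only; the real export schema may differ.

```python
import csv
import io
from dataclasses import dataclass

# Hypothetical export: one row per detected object per indexed frame.
# Column names and values are illustrative, not the documented schema.
SAMPLE_CSV = """timestamp_s,label,confidence
12.0,person,0.91
12.0,car,0.88
13.0,person,0.93
47.5,truck,0.79
"""

@dataclass(frozen=True)
class Detection:
    timestamp_s: float
    label: str
    confidence: float

def load_detections(text: str) -> list[Detection]:
    """Parse exported CSV text into typed detection rows."""
    reader = csv.DictReader(io.StringIO(text))
    return [
        Detection(float(r["timestamp_s"]), r["label"], float(r["confidence"]))
        for r in reader
    ]

detections = load_detections(SAMPLE_CSV)
print(len(detections), "rows loaded; first:", detections[0])
```

Once the rows are typed like this, they can be passed to any reporting or business intelligence tool that accepts tabular data.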

4. Element-Based Filtering: Jump Directly to Relevant Moments

VideoSenseAI indexes every detected object as an individual data point.

This enables instant filtering based on object presence.

Instead of reviewing footage sequentially, users can:

- Filter for specific detected objects

- Jump directly to relevant timestamps

- View only frames containing selected elements

- Verify visual events instantly

This indexing capability effectively turns your video into a fully functional Video Search Engine.
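
The filtering described above can be sketched as a small query over detection rows: select one label, then merge nearby timestamps into continuous segments to jump to. The row shape, the `find_segments` helper, and the `max_gap_s` threshold are hypothetical choices for this example, not part of the product.

```python
# Hypothetical detection rows: (timestamp in seconds, object label).
ROWS = [
    (10.0, "person"), (11.0, "person"), (11.0, "car"),
    (12.0, "person"), (40.0, "person"), (41.0, "person"),
]

def find_segments(rows, label, max_gap_s=1.5):
    """Return (start, end) spans in which `label` is detected.

    Consecutive detections no more than `max_gap_s` apart are
    merged into a single span.
    """
    times = sorted(t for t, detected in rows if detected == label)
    segments = []
    for t in times:
        if segments and t - segments[-1][1] <= max_gap_s:
            # Close enough to the previous detection: extend that span.
            segments[-1] = (segments[-1][0], t)
        else:
            # Gap too large (or first detection): start a new span.
            segments.append((t, t))
    return segments

print(find_segments(ROWS, "person"))  # [(10.0, 12.0), (40.0, 41.0)]
```

Each returned span gives a start time to seek to, so a reviewer would visit two short segments here instead of watching the full recording.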

5. AI Summaries and Transcript-Level Intelligence

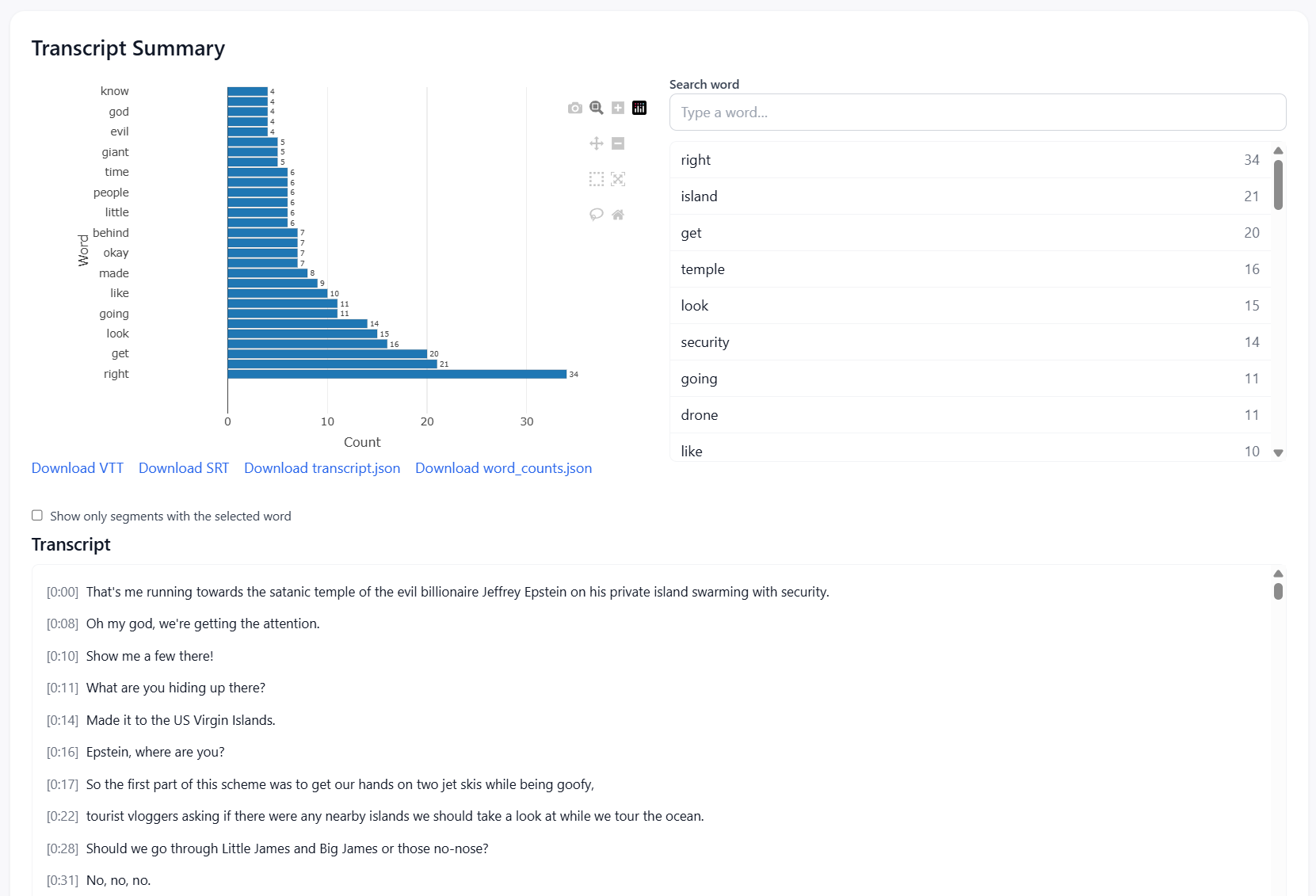

VideoSenseAI enhances structured visual data with audio transcription analysis and AI-generated summaries.

This includes:

- Full transcript generation

- Word frequency analytics

- Topic clustering across conversations

- Timestamp-synchronized transcript navigation

Every transcribed word is linked by its timestamp to the frames on screen when it was spoken, allowing users to correlate spoken content with detected objects and events.
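
One way to picture this correlation is a lookup from each spoken word to the objects detected around the same time. The sketch below assumes timestamped transcript words and time-sorted detection rows; both data shapes, the `objects_near` helper, and the one-second window are illustrative assumptions rather than the product's implementation.

```python
import bisect
from collections import Counter

# Hypothetical data: (timestamp in seconds, value) pairs.
TRANSCRIPT = [(3.0, "the"), (3.4, "forklift"), (9.1, "stopped"), (30.2, "forklift")]
DETECTIONS = [(3.0, "forklift"), (4.0, "person"), (9.0, "person"), (31.0, "forklift")]

def objects_near(detections, t, window_s=1.0):
    """Labels detected within `window_s` seconds of time `t`.

    Assumes `detections` is sorted by timestamp.
    """
    times = [d[0] for d in detections]
    lo = bisect.bisect_left(times, t - window_s)
    hi = bisect.bisect_right(times, t + window_s)
    return sorted({label for _, label in detections[lo:hi]})

# Word frequency across the transcript.
word_counts = Counter(word.lower() for _, word in TRANSCRIPT)

# For each spoken word, list what was visible around that moment.
for t, word in TRANSCRIPT:
    print(f"{t:>5.1f}s  {word:<10} {objects_near(DETECTIONS, t)}")
```

In this sample, both mentions of "forklift" line up with a forklift detection, which is the kind of spoken-versus-seen check the linked transcript makes possible.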

6. Why Structured Video Intelligence Matters for Modern Organizations

By transforming raw footage into structured data, organizations unlock measurable operational advantages.

Key benefits include:

- Reduce manual review time by up to 90%

- Quantify object occurrences with precise counts

- Standardize reporting using structured datasets

- Integrate video intelligence into analytics systems

Instead of relying on subjective observation, decisions can be based on measurable evidence.

7. Conclusion: From Passive Footage to Active Intelligence

Traditional video files are passive assets. They require manual effort to extract value.

VideoSenseAI transforms video into:

- Searchable object indexes

- Structured relational datasets

- Interactive visual analytics

- Exportable intelligence reports

Instead of watching video, organizations can analyze it — faster, more accurately, and at scale.
